Regress x on y

4/19/2023

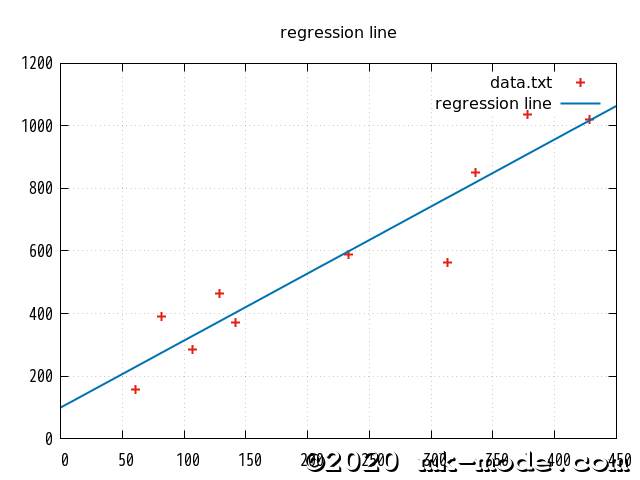

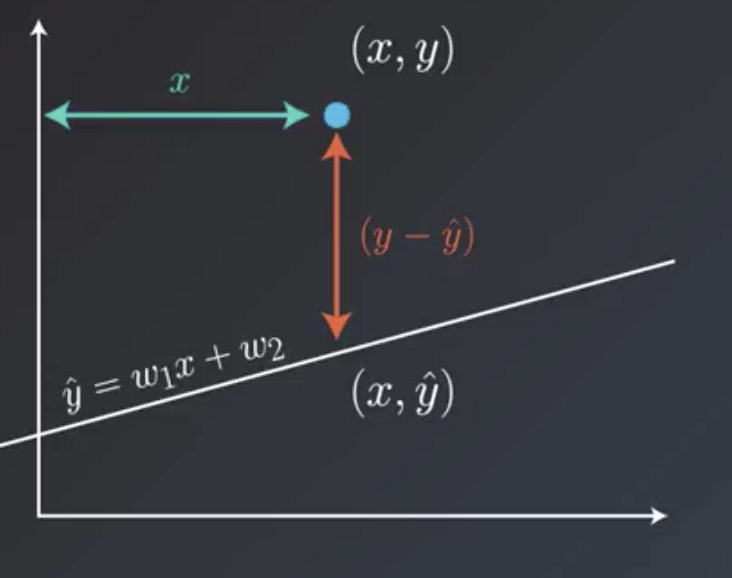

This calculator will determine the values of b and a for a set of data comprising two variables, and estimate the value of Y for any specified value of X. The line of best fit is described by the equation ŷ = bX + a, where b is the slope of the line and a is the intercept (i.e., the value of Y when X = 0). To begin, you need to add paired data into the two text boxes immediately below (either one value per line or as a comma-delimited list), with your independent variable in the X Values box and your dependent variable in the Y Values box. For example, if you wanted to generate a line of best fit for the association between height and shoe size, allowing you to predict shoe size on the basis of a person's height, then height would be your independent variable and shoe size your dependent variable.
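As a rough illustration of what such a calculator does, the slope b and intercept a of ŷ = bX + a can be computed with the usual least squares formulas: b is the sum of cross-deviations divided by the sum of squared X deviations, and a is the mean of Y minus b times the mean of X. The sketch below uses only the Python standard library. The function names `fit_line` and `predict` and the height and shoe-size values are made up for illustration and do not come from the calculator itself.

```python
from statistics import mean


def fit_line(xs, ys):
    """Return (b, a) for the least squares line y_hat = b * x + a."""
    if len(xs) != len(ys):
        raise ValueError("X and Y must contain the same number of values")
    if len(xs) < 2:
        raise ValueError("at least two paired observations are needed")

    x_bar, y_bar = mean(xs), mean(ys)

    # Sum of squared deviations of X, and sum of cross-deviations of X and Y.
    sxx = sum((x - x_bar) ** 2 for x in xs)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    if sxx == 0:
        raise ValueError("all X values are identical, so the slope is undefined")

    b = sxy / sxx            # slope
    a = y_bar - b * x_bar    # intercept: value of y_hat when x = 0
    return b, a


def predict(b, a, x):
    """Estimate Y for a given X using the fitted line."""
    return b * x + a


# Illustrative (invented) paired data: height in cm (X) and shoe size (Y).
heights = [160, 165, 170, 175, 180]
shoe_sizes = [37, 38.5, 40, 41.5, 43]

b, a = fit_line(heights, shoe_sizes)
print(f"slope b = {b:.3f}, intercept a = {a:.3f}")
print(f"estimated shoe size at 172 cm: {predict(b, a, 172):.2f}")
```

The length check matters in practice: paired data only makes sense when every X value has exactly one matching Y value.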

Examples using sklearn.linear_model.This simple linear regression calculator uses the least squares method to find the line of best fit for a set of paired data, allowing you to estimate the value of a dependent variable ( Y) from a given independent variable ( X). Returns : self estimator instanceĮstimator instance. Parameters : **params dictĮstimator parameters. Possible to update each component of a nested object. The method works on simple estimators as well as on nested objects This influences the score method of all the multioutput Multioutput='uniform_average' from version 0.23 to keep consistent The \(R^2\) score used when calling score on a regressor uses sample_weight array-like of shape (n_samples,), default=None y array-like of shape (n_samples,) or (n_samples, n_outputs) Is the number of samples used in the fitting for the estimator. (n_samples, n_samples_fitted), where n_samples_fitted Kernel matrix or a list of generic objects instead with shape For some estimators this may be a precomputed

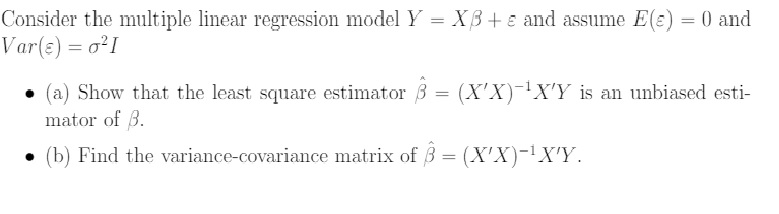

Parameters : X array-like of shape (n_samples, n_features) The expected value of y, disregarding the input features, would getĪ \(R^2\) score of 0.0. The best possible score is 1.0 and it can be negative (because the Is the total sum of squares ((y_true - y_an()) ** 2).sum(). Sum of squares ((y_true - y_pred)** 2).sum() and \(v\) Parameters : X )\), where \(u\) is the residual Return the coefficient of determination of the prediction.įit ( X, y, sample_weight = None ) ¶įit linear model. array ()) 3 > reg = LinearRegression (). > import numpy as np > from sklearn.linear_model import LinearRegression > X = np. Option is only supported for dense arrays.

When set to True, forces the coefficients to be positive. N_targets > 1 and secondly X is sparse or if positive is set Speedup in case of sufficiently large problems, that is if firstly matlab regression linear-regression Share Follow edited at 8:51 Dan 44. I tried with this code : b regress (Y,X) But it gives me this error : Error using > regress at 65 The number of rows in Y must equal the number of rows in X. The number of jobs to use for the computation. I want to regress Y on X (simple linear regression). If True, X will be copied else, it may be overwritten. To False, no intercept will be used in calculations Whether to calculate the intercept for this model. Parameters : fit_intercept bool, default=True The dataset, and the targets predicted by the linear approximation. To minimize the residual sum of squares between the observed targets in LinearRegression fits a linear model with coefficients w = (w1, …, wp) Ordinary least squares Linear Regression. LinearRegression ( *, fit_intercept = True, copy_X = True, n_jobs = None, positive = False ) ¶ Sklearn.linear_model.LinearRegression ¶ class sklearn.linear_model.
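To make the \(R^2\) definition concrete, here is a minimal sketch that computes \(1 - u/v\) by hand for a single output and no sample weights. The helper name `r_squared` and the sample values are assumptions made for illustration; in scikit-learn the equivalent calculation is provided by `sklearn.metrics.r2_score` and by an estimator's `score` method.

```python
def r_squared(y_true, y_pred):
    """Coefficient of determination 1 - u / v for one output, unweighted."""
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")

    y_mean = sum(y_true) / len(y_true)

    # u: residual sum of squares, v: total sum of squares.
    u = sum((yt - yp) ** 2 for yt, yp in zip(y_true, y_pred))
    v = sum((yt - y_mean) ** 2 for yt in y_true)
    if v == 0:
        raise ValueError("y_true is constant, so the ratio u / v is undefined")

    return 1 - u / v


# Invented observations used only to show the three regimes of the score.
y_true = [1.0, 2.0, 3.0, 4.0]

perfect = r_squared(y_true, [1.0, 2.0, 3.0, 4.0])      # predictions match exactly
mean_only = r_squared(y_true, [2.5, 2.5, 2.5, 2.5])    # always predicts the mean of y
reversed_fit = r_squared(y_true, [4.0, 3.0, 2.0, 1.0]) # worse than predicting the mean

print(perfect, mean_only, reversed_fit)
```

The three calls correspond to the cases described above: a perfect fit, a constant model that predicts the mean of y, and a model that does worse than that constant baseline and therefore scores below zero.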
